How to Create an App That Makes Money (And Keeps Users)

You've probably noticed how some apps become part of your daily routine while others get deleted within minutes. Learning how to create an app isn't just about writing code or designing screens anymore. It's about understanding what makes people open your app every morning, what keeps them scrolling, and what turns casual users into loyal advocates who can't imagine their day without what you've built. The practical steps and strategic decisions below are what separate apps gathering dust in the app store from those building real businesses and engaged communities.

Building something that generates revenue and retains users requires the right foundation from day one. Success depends on setting up proper infrastructure for user engagement and monetization without getting lost in technical complexity. The parts that matter most include keeping users active, understanding what they actually do in your app, and building features that make them want to return tomorrow. For developers ready to streamline this process, an AI app generator can provide the essential toolkit needed to focus on what drives real results.

Table of Contents

- "I Just Need a Good Idea"… (Why Most Apps Die Before They Start)

- How Retention and Habits Decide App Winners

- How To Create an App That People Actually Use in 11 Easy Steps

- Stop Dreaming—Ship Your First App Today Without Writing a Single Line

Summary

- Most apps fail within their first year because teams build features before defining what success looks like in behavioral terms. According to Tech Trappers' analysis, 90% of apps fail primarily because creators skip validation and build in a vacuum, creating solutions for problems that don't exist or aren't painful enough to make users switch from what they already use. The failure happens before the first line of code, not at launch.

- Downloads don't predict success; retention does. Enable3's research shows that 77% of users abandon an app within the first 3 days, and the math reveals a brutal funnel in which a thousand downloads can collapse to just 15 active users by day 30. Revenue lives at the bottom of that funnel, not the top, yet most teams celebrate install counts while their actual business bleeds out through retention gaps they never measured.

- Acquiring new users costs five to twenty-five times more than keeping existing ones, and even a 5% increase in customer retention can boost profits by 25 to 95%, according to EasyInsights research. App store algorithms amplify this dynamic by weighting retention metrics heavily in their ranking systems, creating either a virtuous cycle of organic visibility or a death spiral of declining rankings and expensive paid acquisition as your only growth lever.

- Apps don't compete against other apps; they compete against established routines and behaviors already wired into users' daily lives. Your app needs to be dramatically better, not marginally more convenient, to displace what users already use, even if those existing solutions are imperfect. The apps that win build behavioral loops (trigger, action, reward, repeat) that run automatically after enough repetitions, transforming the app from a tool to a habit.

- The minimum viable product isn't about minimum features; it's about minimum functionality required to test whether the behavioral loop works. Five real users (strangers who match your target profile, not supportive friends) are enough to reveal whether your core action creates the predicted behavior, and what they do matters infinitely more than what they say. If users don't intuitively repeat the core action without guidance, your loop is broken, and no amount of tutorials or onboarding flows will resurrect engagement.

- Orchids addresses this by letting teams build and deploy apps without getting stuck in code, integrations, and deployment complexity, so you can focus on testing behavioral loops and iterating based on real user data instead of burning months on technical decisions.

"I Just Need a Good Idea"… (Why Most Apps Die Before They Start)

Thousands of apps launch every day, and few survive. Most teams build features before defining success metrics, target users, or validation methods. The failure happens before the first line of code.

Key Point: The idea phase is where most apps actually die - not in development or launch. Poor planning kills more apps than bad code ever will.

"99.5% of mobile apps fail to become profitable, with most failing due to lack of market validation before development begins." — CB Insights, 2023

Warning: If you're thinking "I just need a good idea," you're already approaching this backwards. Successful apps don't start with ideas - they start with validated problems and defined success metrics.


| Failed App Approach | Successful App Approach |
|---|---|
| Start with a cool idea | Start with a validated problem |
| Build features first | Define success metrics first |
| Hope for users | Research target audience |
| Launch and pray | Test and iterate |

Why do brilliant ideas with great developers still fail?

A great idea plus good technical work doesn't guarantee an app's success. Ideas are easy to find; systems that validate demand, identify users, and measure success are not.

According to Tech Trappers' analysis of mobile app success, 90% of apps fail in their first year. This occurs because teams skip validation and build without user input, creating solutions for problems that don't exist or lack sufficient urgency to displace existing alternatives.

How does overambition sabotage app development?

Teams design as if they're senior studios, even though they're junior teams. They pursue full-scale projects beyond their capacity because complexity feels like ambition.

One team spent months building a sophisticated mobile defence game with constantly shifting direction, only to watch systems lose consistency and the core identity collapse. The game confused users because the creators never answered the question: Who is this for? Why would they play? When would they discover it?

Why Validation Dies in the Planning Phase

Most apps fail because founders treat launch as the finish line instead of the starting point. Building untested ideas wastes time and money on features nobody asked for, then retention collapses in week one.

Unclear audience definitions kill apps quietly. "Men in their 20s" or "teenagers" aren't target users—they're demographic labels. Without understanding how users behave, what problems they face, and how they discover apps, you create generic products that appeal to no one. Feature bloat follows as teams add features, hoping something works, while competitors release simpler solutions that solve one problem well.

What perfectionism mistakes kill products before launch?

Perfectionism paralyzes. Teams tweak endlessly, chasing flawless UI and zero bugs, never testing core mechanics with real users. One game development team constantly changed direction, polishing systems that would later be scrapped because they feared launching anything imperfect. Their game lost its identity while sitting unvalidated in development.

Why does over-engineering your MVP guarantee failure?

Building too much into your first version quietly kills projects. First versions don't need every feature you can think of. They need the minimum functionality to test whether your main idea solves a real problem. If your onboarding takes 90 seconds and users still don't grasp the value, more features won't improve retention. The "aha moment" either happens in the first 30 seconds or it doesn't happen at all.

But here's what nobody admits: even teams that validate and launch lean fail if they don't understand what happens after users install.


How Retention and Habits Decide App Winners

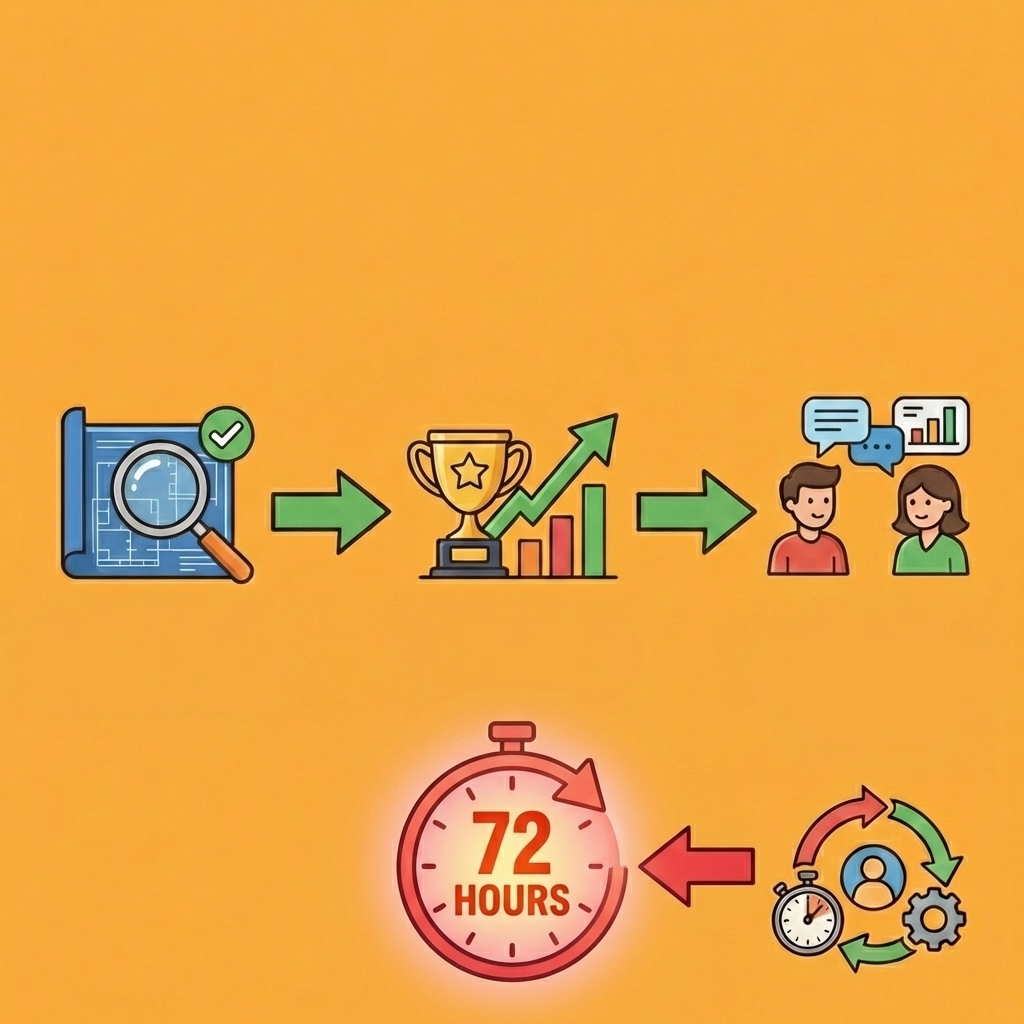

Downloads don't predict success. Retention does. Most users decide within 72 hours whether your app becomes routine or gets deleted. According to Enable3, 77% of users abandon an app within the first 3 days. That's a behaviour problem, not a marketing one.

Key Point: The 72-hour window is your make-or-break moment for building lasting user habits.

A thousand downloads can hide the real issue. Day 1: 300 users return (30%). Day 7: 80 remain active (8%). Day 30: 15 still engage (1.5%). Revenue lives at the bottom of that funnel, yet teams celebrate installs while their business bleeds through retention gaps they never measured.
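The funnel arithmetic is worth making explicit. Here is a minimal Python sketch that turns the illustrative cohort above (1,000 installs, then 300, 80 and 15 active users) into Day-N retention rates. The numbers are the example's, not measured data, and "retention" here simply means active users divided by the original install count.

```python
# Illustrative cohort from the example: 1,000 installs, then active users on Day 1, 7 and 30.
INSTALLS = 1_000
ACTIVE_BY_DAY = {1: 300, 7: 80, 30: 15}


def retention_rates(installs: int, active_by_day: dict[int, int]) -> dict[int, float]:
    """Day-N retention as a fraction of the original install cohort."""
    return {day: active / installs for day, active in sorted(active_by_day.items())}


rates = retention_rates(INSTALLS, ACTIVE_BY_DAY)
for day, rate in rates.items():
    print(f"Day {day:>2}: {rate:.1%} of installs still active")
```

Read this way, 985 of every 1,000 installs have stopped engaging by Day 30, which is why the bottom of the funnel is where revenue is decided.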

"77% of users abandon an app within the first 3 days, turning the initial download surge into a retention crisis." — Enable3, 2025

Warning: Focusing on download metrics while ignoring Day 30 retention is like measuring website visits instead of actual sales.

Why does customer acquisition cost so much more than retention?

Getting new users costs five to twenty-five times as much as retaining existing ones. Research from EasyInsights shows that even a 5% increase in customer retention can boost profits by 25-95%. Retained users require no acquisition cost, generate predictable revenue, and typically increase their usage over time.

How do app store algorithms reward retention?

App store algorithms amplify this dynamic. Both Apple and Google weigh user engagement in their ranking systems. High engagement signals quality, which drives organic visibility and brings in users without advertising costs. Low engagement does the opposite: rankings drop, and you have to spend more on ads to maintain growth while users churn faster than replacements arrive.

What are you really competing against when building an app?

You're not competing against other apps. You're competing against established routines. Users already have systems for the problems you claim to solve: email threads for coordination, notes apps for lists, browser tabs for research. Your app needs to be dramatically better, not marginally more convenient, to displace what's wired into their daily behaviour.

How do successful apps create behavioral loops?

The apps that win build behavioral loops, not feature sets: trigger, action, reward, repeat. Duolingo succeeds not because it teaches languages better than alternatives, but because the streak system creates a daily trigger that makes skipping feel like breaking a commitment. That loop runs automatically after enough repetitions, transitioning the app from tool to habit.
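To make the loop concrete, here is a minimal Python sketch of a daily streak counter, one common way to build the "don't break the chain" trigger described above. It is not Duolingo's implementation; `current_streak` and `should_send_reminder` are hypothetical names, and the sketch assumes you already store the dates on which each user completed the core action.

```python
from datetime import date, timedelta


def current_streak(activity_days: set[date], today: date) -> int:
    """Consecutive days with at least one core action, counting back from today.

    If the user hasn't acted yet today, a streak that ran through yesterday is
    still alive (they can extend it before the day ends), so counting starts
    from yesterday instead of resetting to zero.
    """
    day = today if today in activity_days else today - timedelta(days=1)
    streak = 0
    while day in activity_days:
        streak += 1
        day -= timedelta(days=1)
    return streak


def should_send_reminder(activity_days: set[date], today: date) -> bool:
    """The trigger: nudge only users with a live streak who haven't acted today."""
    return today not in activity_days and current_streak(activity_days, today) > 0


days = {date(2024, 5, 1), date(2024, 5, 2), date(2024, 5, 3)}
print(current_streak(days, date(2024, 5, 4)))        # 3: streak alive, not yet extended
print(should_send_reminder(days, date(2024, 5, 4)))  # True: the "don't break it" moment
print(current_streak(days, date(2024, 5, 5)))        # 0: a full day was missed
```

The reward is the number itself: each completed action extends it, and the reminder fires only when there is something to lose.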

Teams building apps through conversational interfaces with platforms like Orchids can prototype behavioural mechanics faster because they're not locked into rigid feature roadmaps. Testing whether notification timing drives repeat usage or whether a progress indicator creates emotional investment becomes a conversation, not a three-week sprint. This flexibility to iterate on the trigger-action-reward cycle determines whether you discover the habit loop before your retention window closes.

Why do most teams fail to find the habit loop?

Most teams never find that loop because they're optimizing the wrong things. They polish the user interface when the core mechanic doesn't give users a reason to return. They add features when the first-time experience fails to deliver an immediate win. The "aha moment" happens in the first session or never. If users don't understand the value within 30 seconds, no onboarding tutorial will revive engagement.


How To Create an App That People Actually Use in 11 Easy Steps

Define success criteria before choosing tools or writing code. Start with measurable outcomes: Day 7 retention ≥ X%, users complete the core action ≥ 3 times per week. Strip your app down to one essential behavior and build the engagement loop around it. If users repeat the core action, your app grows. If they don't, nothing else matters.

Key Point: Your app's success hinges on one measurable behavior - everything else is secondary to getting users to repeat your core action consistently.

"Define success criteria before choosing tools or writing code - if users don't repeat the core action, nothing else matters." — App Development Best Practice

Warning: Most apps fail because they try to do everything instead of perfecting one essential behavior that drives long-term engagement.

1. Success Metrics Come Before Features

Most teams do the opposite. They sketch wireframes, debate tech stacks, and prototype features before defining what user behavior indicates success. The code compiles, the UI looks polished, and the features function as designed. Then the launch happens, users install once and never return, and the team cannot explain why because they never established what "working" meant in behavioural terms.

How do you define what working means in behavioral terms?

Developers skip the uncomfortable step of writing down exactly what user action, repeated at what frequency, proves the app solves a problem worth returning to. Without that anchor, every decision becomes subjective. Should we add social sharing? It depends on whether sharing drives repeat usage. Should we redesign onboarding? Only if the current version prevents users from completing the core action more than 3 times in their first week.

What happens when you ship features faster than you can validate them?

AI-assisted development lets you ship features faster than you can verify whether those features create habits. One sentence should define your entire product: "Users come here to [action], at least [frequency]." If you can't write that sentence with specific numbers, you're not ready to build.

2. Strip Down to One Core Action

Apps fail because teams build ten features when users need one repeatable behaviour. Users don't download apps to explore possibilities; they download to solve a specific problem immediately. If that solution doesn't deliver an immediate win, they're gone before discovering feature number seven.

How does the behavioral loop create lasting engagement?

The behavioral loop must be obvious within thirty seconds: open the app, understand what it does, complete the core action, and feel progress. That loop either clicks in the first session or never. Duolingo wins not because it teaches languages better than Rosetta Stone, but because the streak mechanic creates a daily trigger that makes skipping feel like breaking a commitment. The reward is not fluency but maintaining consistency, and that emotional satisfaction runs automatically after enough repetitions.

What makes successful apps different from complex ones?

Building a fighter jet instead of a passenger jet means removing everything except the mission. You're creating one reason to return, tested until that reason proves strong enough to become a habit. More features feel safer, but winning apps aren't the most complex. They're the ones people can't stop coming back to.

3. Test the Core Loop with Five Real Users

Validation happens before full development, not after launch when retention collapses. Five users suffice to show whether your core action creates the predicted behaviour—but they must be strangers matching your target profile, not friends emotionally invested in your success.

How do you observe users testing your core loop?

Watch them use the app without guidance. Don't explain features, help when they hesitate, or dismiss confusion as a minor design problem. If they don't naturally repeat the main action on their own, your loop isn't working.

The urge to add tutorials and onboarding flows is a trap: habits form through easy repetition, not by struggling to remember instructions.

What should you measure during user testing?

Write down what they do, not what they say. Users might say they like the idea and still never use it again.

Behavioral data shows what is happening: Did they finish the main action? Did they return within 48 hours? Did they do it at least three times in the first week? These answers tell you whether you have a real product or a prototype requiring major changes.
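If you log each tester's sessions and core actions, those three questions reduce to a few comparisons. A minimal Python sketch, assuming you have timestamps for session starts and completed core actions; `TesterResult` and `evaluate_tester` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TesterResult:
    completed_core_action: bool
    returned_within_48h: bool
    repeated_3x_first_week: bool


def evaluate_tester(sessions: list[datetime], core_actions: list[datetime]) -> TesterResult:
    """Answer the three behavioural questions for one test user.

    sessions: start times of every session (the earliest is the first open).
    core_actions: times the user completed the core action.
    """
    first_open = min(sessions)
    first_week_end = first_open + timedelta(days=7)
    return TesterResult(
        completed_core_action=len(core_actions) > 0,
        # A "return" is any later session within 48 hours of the first open.
        returned_within_48h=any(
            first_open < s <= first_open + timedelta(hours=48) for s in sessions
        ),
        repeated_3x_first_week=sum(first_open <= t < first_week_end for t in core_actions) >= 3,
    )


t0 = datetime(2024, 1, 1, 10, 0)
# One session, one core action: liked it once, never came back.
print(evaluate_tester([t0], [t0 + timedelta(minutes=1)]))
```

With five testers, five of these records tell you more than any post-session interview.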

4. Make Tool Choice Secondary to Behavior Design

Asking whether to use no-code platforms, custom development, or AI-assisted coding is the wrong first question. The right first question is: what behavior are we trying to create? Once you answer that clearly and specifically, choosing the right tool becomes straightforward based on your constraints: available time, budget, technical complexity, and team capabilities.

How does faster iteration help validate user behavior?

Teams building apps through conversational interfaces on platforms like Orchids can prototype behavioural mechanics faster because iteration doesn't require three-week sprints. Testing whether notification timing drives repeat usage or whether a progress indicator creates emotional investment becomes a conversation, not a rebuild. That speed advantage matters most in the validation phase, when you're searching for the trigger-action-reward cycle that makes users return before your retention window closes.

Why should you define behavioral outcomes before choosing tools?

The tool doesn't create the behaviour—you do. If you haven't defined what success looks like, the fastest development environment helps you build the wrong thing more efficiently. Define the behavioural outcome first, test with real users second, then choose the tool that lets you iterate toward that outcome fastest, given your constraints.

5. Define Your App in One Measurable Sentence

"Users come here to track online orders, at least twice per week." This sentence captures the core insight: people check orders frequently enough to adopt a new tool over Gmail's partial solution or existing apps like Shop. If that premise is wrong, no amount of feature polish will retain users.

Why does this sentence force clarity?

The sentence forces clarity. Not "users can track orders and view spending insights and get delivery notifications," but the one action that must repeat for the app to work as a business. Everything else is secondary until you prove users will complete that action at the required frequency to generate sustainable engagement.
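One way to hold the team to that sentence is to store it as data and check real usage against it. A hypothetical Python sketch using the order-tracking example; `CoreLoop` and the weekly counts are illustrative, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoreLoop:
    """A product's one-sentence definition, stored as data so it can be checked."""
    action: str
    min_per_week: int

    def sentence(self) -> str:
        times = {1: "once", 2: "twice"}.get(self.min_per_week, f"{self.min_per_week} times")
        return f"Users come here to {self.action}, at least {times} per week."

    def share_meeting_target(self, weekly_counts: list[int]) -> float:
        """Fraction of users in the cohort whose core-action count this week meets the target."""
        if not weekly_counts:
            return 0.0
        return sum(count >= self.min_per_week for count in weekly_counts) / len(weekly_counts)


order_tracking = CoreLoop(action="track online orders", min_per_week=2)
print(order_tracking.sentence())
# Users come here to track online orders, at least twice per week.
print(order_tracking.share_meeting_target([0, 1, 2, 3]))  # 0.5
```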

What happens when teams can't write this sentence?

Most teams can't write this sentence because they haven't decided what success looks like. They're building a platform with multiple value propositions, hoping one resonates. That approach works for companies with massive marketing budgets that can afford to educate users over months. For everyone else, it's a path to quiet failure where the app functions but nobody uses it enough to matter.

6. Monitor Analytics That Measure Repeat Behavior

Download counts are vanity metrics. Day 7 retention, core action frequency, and time to second session are truth metrics. If 1,000 users install but only 80 return after a week, you have a product problem, not a marketing problem. The core loop didn't create enough value in the first session to overcome the friction of returning.
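All three truth metrics can come from one raw event log. A minimal Python sketch, assuming each row is `(user_id, timestamp, event_name)` with illustrative event names `session_start` and `core_action` (not any analytics SDK's schema). Definitions of Day 7 retention vary; this one counts any activity in the 24 hours starting seven days after a user's first event.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import median

# Hypothetical event log rows: (user_id, timestamp, event_name).
Event = tuple[str, datetime, str]


def truth_metrics(events: list[Event]) -> dict[str, float]:
    by_user: dict[str, list[tuple[datetime, str]]] = defaultdict(list)
    for user_id, ts, name in events:
        by_user[user_id].append((ts, name))
    if not by_user:
        raise ValueError("no events to analyse")

    retained_day7 = 0
    week1_actions: list[int] = []
    hours_to_second_session: list[float] = []
    for evs in by_user.values():
        evs.sort()
        install = evs[0][0]  # first event we saw for this user
        day7 = install + timedelta(days=7)
        if any(day7 <= ts < day7 + timedelta(days=1) for ts, _ in evs):
            retained_day7 += 1
        week1_actions.append(
            sum(1 for ts, name in evs if name == "core_action" and ts < install + timedelta(days=7))
        )
        sessions = [ts for ts, name in evs if name == "session_start"]
        if len(sessions) >= 2:
            hours_to_second_session.append((sessions[1] - sessions[0]).total_seconds() / 3600)

    n_users = len(by_user)
    return {
        "day7_retention": retained_day7 / n_users,
        "core_actions_per_user_week1": sum(week1_actions) / n_users,
        "median_hours_to_second_session": (
            median(hours_to_second_session) if hours_to_second_session else float("nan")
        ),
    }


t0 = datetime(2024, 1, 1, 9, 0)
log = [
    ("u1", t0, "session_start"), ("u1", t0 + timedelta(minutes=1), "core_action"),
    ("u1", t0 + timedelta(days=1), "session_start"),
    ("u1", t0 + timedelta(days=7, hours=2), "session_start"),
    ("u2", t0, "session_start"), ("u2", t0 + timedelta(minutes=5), "core_action"),
]
print(truth_metrics(log))
# {'day7_retention': 0.5, 'core_actions_per_user_week1': 1.0, 'median_hours_to_second_session': 24.0}
```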

How do you identify where users abandon your core action?

Track where users stop during the main action. If 60% abandon halfway through, the action is too difficult, or the benefit isn't clear enough. If users complete the action once but never return, the reward wasn't compelling, or there's no reason to come back. These patterns reveal what to fix, but only if you track the right metrics from the start.
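Finding the step where users give up is a simple funnel count. A minimal Python sketch, assuming you record which steps of the core action each user completed; the step names describe a hypothetical add-an-order flow, and a user only counts at a step if they also completed every earlier one.

```python
def step_dropoff(steps: list[str], completed: dict[str, set[str]]) -> list[tuple[str, int, float]]:
    """For each step of the core action, count users who reached it (having done every
    earlier step) and the share lost since the previous step."""
    results: list[tuple[str, int, float]] = []
    required: set[str] = set()
    previous = None
    for step in steps:
        required.add(step)
        reached = sum(1 for done in completed.values() if required <= done)
        lost = 0.0 if not previous else 1 - reached / previous
        results.append((step, reached, lost))
        previous = reached
    return results


# Toy data: 10 users open the flow, 4 paste a tracking number, 4 save the order.
steps = ["open_add_order", "paste_tracking_number", "save_order"]
completed = {f"quit{i}": {"open_add_order"} for i in range(6)}
completed |= {f"done{i}": set(steps) for i in range(4)}
for step, reached, lost in step_dropoff(steps, completed):
    print(f"{step:<22} reached={reached:>2}  lost since previous={lost:.0%}")
```

A 60% loss concentrated at one step, as in this toy data, points at that step's friction rather than at the product as a whole.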

Why do technically perfect apps still fail?

Most teams build staging environments and CI/CD pipelines but neglect systems for measuring user retention. They track technical metrics such as crash rates and load times, while missing the behavioural signals that predict survival. The app can be technically flawless and still fail because users didn't need it enough to change their habits.

7. Test Unhappy Paths Before Scaling Features

Edge cases show whether your app creates real value or merely works when conditions are ideal. What happens when the order tracking API fails? When notifications don't arrive? When the user's internet connection drops mid-transaction? If the app stops working during these moments, users won't trust it enough to use it regularly.
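Handling the first of those cases mostly means deciding in advance what the user sees. A minimal Python sketch, assuming a hypothetical `fetch` function that calls your order-tracking API and raises `ConnectionError` or `TimeoutError` on network trouble; the retry count and delays are placeholder values.

```python
import time
from typing import Callable


def get_order_status(
    fetch: Callable[[str], str],
    order_id: str,
    cache: dict[str, str],
    retries: int = 2,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[str, bool]:
    """Return (status, is_fresh) without raising on connection errors or timeouts.

    Transient failures are retried with exponential backoff (0.5s, 1s, ...);
    if every attempt fails, fall back to the last known status so the UI can
    show "last updated earlier" instead of an error screen.
    """
    for attempt in range(retries + 1):
        try:
            status = fetch(order_id)
            cache[order_id] = status  # remember the last good answer
            return status, True
        except (ConnectionError, TimeoutError):
            if attempt < retries:
                sleep(base_delay * 2 ** attempt)
    return cache.get(order_id, "unknown"), False
```

Passing `sleep` in as a parameter keeps the function testable: a test can swap in a no-op and simulate a flaky API without waiting.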

What happens when teams skip unhappy path testing?

Teams that build ten features before testing one core action usually skip unhappy-path testing as well. Then the launch happens: users hit the first error, lose trust, and uninstall. The app worked perfectly in the demo environment but fails in the messy reality of inconsistent networks, varied devices, and unexpected user behaviours.

How should you test for real-world imperfection?

Structured usability sessions should include deliberately broken scenarios. Give users a version where the primary feature intermittently fails. Do they retry? Do they find workarounds? Do they abandon? The answers reveal whether your app delivers enough value to survive imperfection, which is the environment every app inhabits after launch. If users only engage when everything works flawlessly, you've built a demo, not a habit.
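A deliberately flaky build doesn't need special infrastructure. A minimal Python sketch, assuming the primary feature goes through a single function you can wrap; `with_injected_failures` is a hypothetical helper, and the failure rate is whatever you choose for the session.

```python
import random
from typing import Callable


def with_injected_failures(
    fn: Callable[[str], str], failure_rate: float, seed: int = 0
) -> Callable[[str], str]:
    """Wrap a call so it raises ConnectionError at roughly `failure_rate`.

    Intended for usability-test builds only. The fixed seed makes the failure
    pattern reproducible, so every tester meets the same sequence of failures.
    """
    rng = random.Random(seed)

    def wrapped(arg: str) -> str:
        if rng.random() < failure_rate:
            raise ConnectionError("injected failure for usability testing")
        return fn(arg)

    return wrapped
```

For example, wrapping a hypothetical `fetch_status` with `failure_rate=0.3` gives testers a version where roughly three in ten status checks fail, and you watch whether they retry, work around it, or leave.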

8. Resist Feature Bloat Until the Core Loop Proves Out

After you launch something, the natural instinct is to add more features. Users request new things, competitors release updates, and your roadmap fills with ideas that seem like progress. But every new feature makes it harder for people to focus on what matters most and obscures the main action. Apps fail more often because of too many features than because of too few.

What happens when you build features before proving the core loop?

One team spent months building expense insights and spending reports for their order-tracking app before proving that users would repeatedly check order status. Users installed, checked one order, then forgot the app existed. Adding premium features to a broken behavioural loop is like adding rooms to a house with no foundation.

How do you know which features actually strengthen user behavior?

Saying no to reasonable feature requests feels like ignoring user feedback. But users don't know what drives repeat behaviour—they know what sounds useful in theory. Your job is to prove what creates repeat behaviour in practice, then build only the features that strengthen that loop. Everything else is a distraction disguised as improvement.

9. Document Success Criteria in Your Development Process

Technical best practices matter, but behavioural success criteria matter more. The CLAUDE.md file or equivalent documentation should enforce retention targets and core action frequency from day one, not code quality standards alone. If the app ships without hitting Day 7 retention of at least 30%, that's a failure regardless of architecture quality.
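Written down as data, those targets can be checked in every sprint review rather than remembered. A minimal Python sketch using this section's two numbers (30% Day 7 retention, three core actions in the first week). Treating the second as a per-user average is an assumption; a stricter team might require most users to hit it individually.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Behavioural targets written down next to the code (values from the text above)."""
    min_day7_retention: float = 0.30
    min_core_actions_week1: float = 3.0


def review(metrics: dict[str, float], criteria: SuccessCriteria = SuccessCriteria()) -> list[str]:
    """Return the criteria the current build misses; an empty list means targets are met."""
    misses = []
    retention = metrics["day7_retention"]
    if retention < criteria.min_day7_retention:
        misses.append(f"Day 7 retention {retention:.0%} is below {criteria.min_day7_retention:.0%}")
    actions = metrics["core_actions_per_user_week1"]
    if actions < criteria.min_core_actions_week1:
        misses.append(
            f"{actions:.1f} core actions per user in week 1, target {criteria.min_core_actions_week1:.0f}"
        )
    return misses


print(review({"day7_retention": 0.22, "core_actions_per_user_week1": 3.4}))
# ['Day 7 retention 22% is below 30%']
```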

What documentation gaps let technically successful projects become business failures?

Most documentation focuses on deployment rather than user behaviour. Teams document API endpoints and database schemas, but omit that users must complete the core action three times in their first week, or the product fails. This gap allows technically successful projects to become business failures because nobody connects engineering effort to behavioural outcomes.

How does the right documentation make success measurable and visible?

The right documentation makes success measurable and visible. Every sprint review should include retention metrics and core action frequency alongside feature completion. If those numbers aren't moving toward the target, stop building new capabilities and fix the behavioural loop. Documentation that tracks both prevents teams from optimising the wrong variables.

10. Build for Retention, Not Complexity

Senior studios can afford to take on complex projects because they have the resources to try different approaches and learn from failures. Junior teams attempting the same scope create fragmented apps as direction shifts. The sophisticated mobile defence game that confused users didn't fail from lack of skill—it failed because the team built complexity before proving the core mechanic was compelling enough to make players return.

Apps that survive their first year solve one problem exceptionally well. They resist becoming platforms until they prove product-market fit, measuring success by user return frequency rather than feature count.

How does retention create an economic foundation?

According to Enable3's retention analysis, 77% of users stop using apps within three days. Adding more features won't improve this. Instead, make the core action so useful that skipping it feels like missing out.

Retention creates the economic foundation for everything else. High retention drives organic ranking and free users, while retained users cost nothing to reacquire and often increase usage over time. Low retention produces the opposite: declining visibility, expensive paid acquisition, and faster churn. Every decision should focus on the behaviour that makes users return, not the complexity that impresses other developers.

11. Ship the Minimum Behavioral Loop, Then Iterate

The MVP isn't about minimum features—it's about minimum functionality required to test whether the behavioural loop works. If users need to track orders, the MVP lets them add an order, check status, and receive updates: nothing else. Just the core action, stripped to its essence, ready to prove whether people will repeat it.
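To show how small that is, here is a hypothetical in-memory Python sketch of exactly those three capabilities. It leaves out persistence, carrier APIs and push delivery, which is the point: everything else can wait until users prove they repeat the core action. `OrderTracker` and its methods are illustrative names.

```python
from dataclasses import dataclass, field
from typing import Callable

Listener = Callable[[str, str], None]


@dataclass
class OrderTracker:
    """Hypothetical in-memory MVP: add an order, check its status, get told when it changes."""
    statuses: dict[str, str] = field(default_factory=dict)
    listeners: list[Listener] = field(default_factory=list)

    def add_order(self, order_id: str) -> None:
        self.statuses.setdefault(order_id, "ordered")

    def status(self, order_id: str) -> str:
        return self.statuses[order_id]

    def subscribe(self, listener: Listener) -> None:
        self.listeners.append(listener)

    def update(self, order_id: str, new_status: str) -> None:
        """Record a carrier update; notify only when the status actually changes."""
        if order_id not in self.statuses:
            raise KeyError(order_id)
        if self.statuses[order_id] != new_status:
            self.statuses[order_id] = new_status
            for listener in self.listeners:
                listener(order_id, new_status)


tracker = OrderTracker()
tracker.subscribe(lambda order_id, status: print(f"{order_id} is now {status}"))
tracker.add_order("A1")
tracker.update("A1", "shipped")  # prints: A1 is now shipped
tracker.update("A1", "shipped")  # no change, no notification
```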

This approach feels incomplete compared to competitors with years of development. But you're not competing on feature count—you're competing on whether you can create a habit before your runway ends. The team that ships a simple loop and iterates based on behavioural data beats the team that builds a feature-rich platform nobody uses consistently.

Why does iteration speed determine survival?

How fast you can test and improve your app determines whether it survives. If testing shows that users quit after using it once, change the main mechanic instead of adding new features to something that doesn't work. If users keep coming back but ask for improvements, you know what to build next because you're optimising a loop that already works. Apps that failed spent months perfecting features before discovering the main behaviour wasn't interesting. Apps that succeeded proved the behaviour worked first, then built everything else around it.

But even teams who test their ideas and improve them still face the hardest question: how do you build this without spending months learning to code or using money you don't have?

Stop Dreaming—Ship Your First App Today Without Writing a Single Line

Most app ideas fail before launch, not because they're bad, but because execution gets stuck in code, integrations, and deployment. You have the concept and know what drives retention. Then you hit the wall: building it requires months of learning to code, hiring developers you can't afford, or wrestling with no-code platforms that break when you need custom logic.

Key Point: The execution gap kills more apps than bad ideas ever will.

You understand what users need to do repeatedly and have defined success clearly. But translating that into a functioning app requires choosing between learning Swift and React Native, debugging API integrations, configuring databases, setting up authentication, and deploying to production. Each technical decision branches into ten more, and six months vanish while competitors ship simpler solutions.

"The average app takes 4-6 months to develop from concept to launch, during which 70% of similar ideas enter the market." — App Development Survey, 2024

| Traditional Development | Orchids Platform |
|---|---|
| Months of learning code | Minutes to deploy |
| $50K+ developer costs | Free to start |
| Complex integrations | One-click connections |
| Weeks for changes | Instant updates |

Orchids changes that equation. Build real apps (web, mobile, scripts, bots, extensions) without starting from scratch or surrendering control. Use your own LLM and API keys to control cost and functionality. Connect any stack you need—database, authentication, payments—without unnecessary engineering complexity. Make UI or copy tweaks instantly without breaking code or waiting for developer feedback.

Warning: Don't let technical complexity kill your app idea before users can validate it.

You bring the idea. Orchids handles deployment, security audits, and the heavy lifting so your app actually launches instead of stalling in development. The platform doesn't lock you into templates or force compromises on the core mechanic that keeps users coming back. It translates what you need into what ships, then lets you iterate based on real user behaviour.

Tip: Your next step: Build and deploy your first app for free. One click. One domain. One live app. Because an idea without execution is just a dream, and with Orchids, shipping becomes inevitable.


Bilal Dhouib

Head of Growth @ Orchids