12 Best LLMs for Coding in 2026 (And How to Pick One Fast)

The race to ship better software faster has never been more competitive, and developers are searching for every advantage they can get. Choosing the right large language model for coding can transform how developers work, helping them write code faster, reduce bugs, and accelerate development projects. The challenge lies in cutting through the noise to find the best coding LLMs in 2026 and picking the right one quickly.

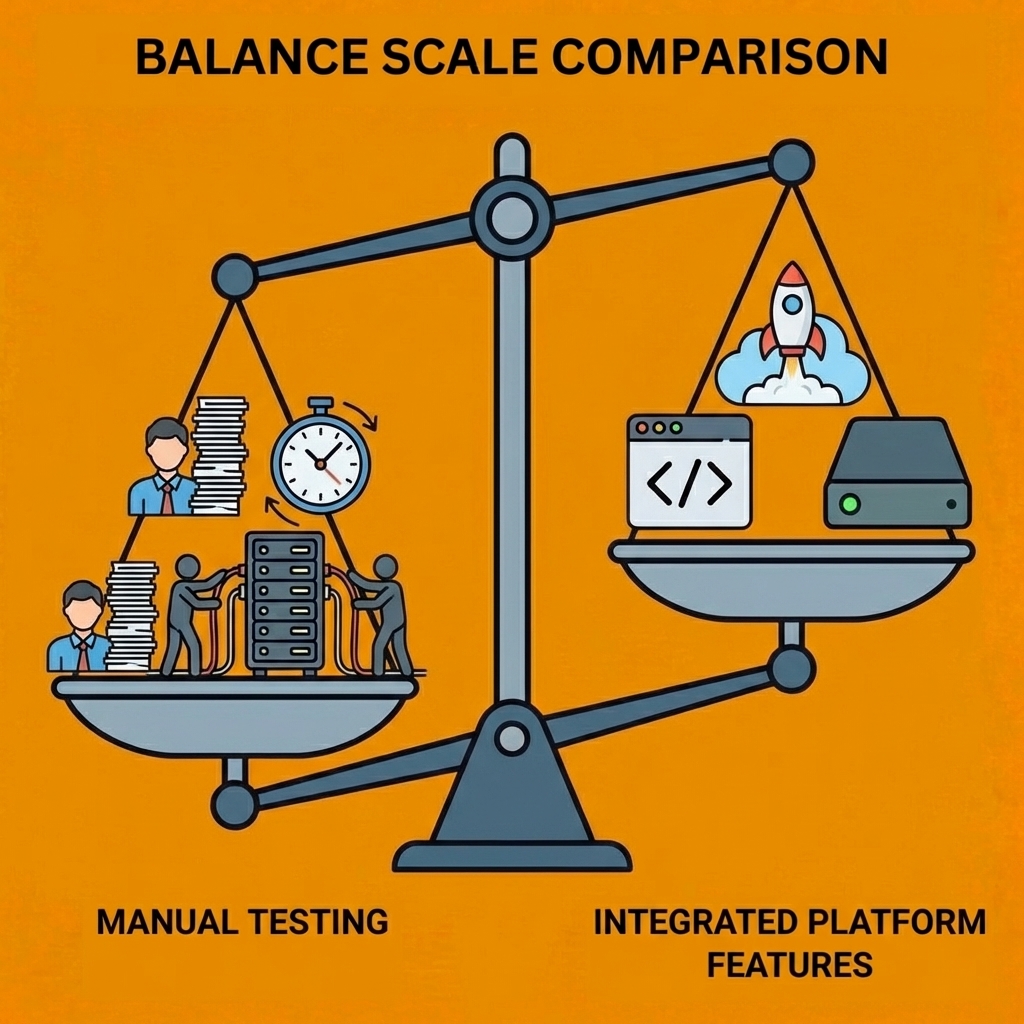

While comparing code generation models and testing different AI assistants takes time most developers don't have, there's a simpler path forward. Instead of manually evaluating each coding assistant, developers need a solution that understands project needs and generates working applications. This approach lets teams focus on building what matters rather than configuring what doesn't, which is exactly what Orchids's AI app generator delivers.

Table of Contents

- Are All Coding LLMs Equally Capable?

- How Coding LLMs Actually Perform (Mechanism and Benchmarks)

- 12 Best LLMs for Coding (Tools That Make Your Life Easier)

- How to Measure LLM Performance for Your Projects

- Stop Wasting Hours Debugging — Let AI Handle the Heavy Lifting

Summary

- Coding LLMs vary dramatically in real-world performance despite similar benchmark scores. DeepSeek-V3 achieved 65.2% on SWE-Bench Verified, while Claude 3.5 Sonnet scored 49.0%, demonstrating how architectural choices directly impact capability on production-level tasks. Specialized models like Codestral optimize for code syntax and structure, trading breadth for precision, while general-purpose models excel at conversational generation but stumble on multi-file debugging. Context window size determines whether a model can analyze how new functions interact with existing middleware, database schemas, and API routes across dozens of files, or forces you to manually chunk code and lose architectural insights.

- Standard benchmarks inflate performance through training data contamination. Top frontier models score 70%+ on SWE-Bench Verified but drop to roughly 23% on SWE-Bench Pro, the contamination-resistant variant sourced from complex real-world codebases. That 47-point gap reveals how much models memorize solutions to popular GitHub issues rather than learning to solve novel problems. Models identify faults in only 74% of debugging tasks, with performance dropping sharply when semantic-preserving mutations such as variable renaming are introduced. The gap between generating syntactically correct code and understanding how software actually runs in production environments remains wide enough to make fully autonomous development impractical beyond isolated functions.

- Model performance depends on the combination of the LLM and the tooling, not on the model alone. A model generating code in isolation cannot run tests, execute shell commands, configure Docker networking, or debug why package imports fail due to conflicting dependencies. WebDev Arena ranks models through head-to-head human preference voting, while LiveCodeBench continuously pulls new competition problems, preventing models from training their way to inflated scores. Chatbot Arena's blind Elo voting across coding tasks remains the most reliable proxy for real-world output quality because humans evaluate the final result rather than intermediate benchmark performance.

- Different models exhibit distinct failure modes across languages and frameworks. Research analyzing code generation benchmarks identified 114 tasks that LLMs consistently struggled with, revealing systematic blind spots in state mutation tracking, pointer arithmetic in memory-unsafe languages, and the correct implementation of backtracking algorithms with multiple exit conditions. A prompt that causes Claude to hallucinate package versions might work flawlessly in GPT-4o, while Gemini excels at SQL optimization but struggles with Rust memory management. Some teams respond by practicing model rotation: switching between LLMs for the same task and using whichever handles the specific context best, rather than committing to a single model for everything.

- Testing models in isolation misses how code actually ships to production. The fastest way to find which model works for your project is to build something real and measure whether you shipped faster than you would have by hand, rather than reading more benchmarks. Most developers test LLMs by pasting prompts into chat interfaces, but production code must integrate with existing infrastructure, pass CI/CD pipelines, connect to databases, handle authentication, and deploy without breaking systems. Task-specific testing against your actual codebase reveals capability gaps that standard benchmarks miss completely.

- Orchids addresses this by connecting your existing ChatGPT, Claude, Gemini, or GitHub Copilot subscription to a full-stack execution layer that handles planning, debugging, command execution, database connections, authentication, and deployment while you control which model runs your prompts.

Are All Coding LLMs Equally Capable?

No. Coding LLMs differ in design, training approach, and reasoning depth, and those differences show up as clear performance gaps in real development work. Specialization, context handling, and problem-solving method produce substantial variation even among models trained on similar data.

Key Point: The specialization level of a coding LLM directly impacts its ability to understand complex programming contexts and generate accurate solutions.

Warning: Assuming all coding LLMs perform equally can lead to poor tool selection and suboptimal development outcomes for your specific use case.

How do model architectures create different coding capabilities?

General-purpose models like GPT-4o excel at writing code in conversation but struggle with debugging multi-file applications or identifying logic errors across large codebases. Specialized models like Codestral prioritize code syntax and structure, trading flexibility for accuracy.

According to The State Of LLMs 2025, DeepSeek-V3 achieved 65.2% on SWE-Bench Verified, outperforming Claude 3.5 Sonnet's 49.0%, demonstrating how model architecture directly affects performance on real-world tasks. Reasoning models break problems into visible steps, allowing you to catch errors before code deployment. Direct-answer models skip this step, making them faster but riskier for complex, multi-stage work.

Why does context window size matter for coding tasks?

The size of a context window directly affects what a model can do. A model that can process 100,000 tokens can examine how a new authentication function works with existing middleware, database schemas, and API routes across dozens of files.

Smaller context windows force you to feed code in pieces, losing the connections that reveal architectural conflicts or security gaps. Gemini 1.5 Pro's large context window enables changes across an entire repository, while limited-window models require manual code segmentation and reassembly.
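A quick pre-flight check can show whether a set of files is likely to fit in a given window before you decide to chunk them. The sketch below is a rough estimate only. It assumes about four characters per token, which is a heuristic and not any model's real tokenizer, and the function names `estimate_tokens` and `fits_in_context` are hypothetical.

```python
from typing import Dict

CHARS_PER_TOKEN = 4  # assumption: rough average; real tokenizers vary by model


def estimate_tokens(text: str) -> int:
    """Approximate token count by rounding up len(text) / CHARS_PER_TOKEN."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def fits_in_context(files: Dict[str, str], window_tokens: int,
                    reserved_for_output: int = 4_000) -> bool:
    """Return True if all files plus an output reserve fit in the window."""
    total = sum(estimate_tokens(source) for source in files.values())
    return total + reserved_for_output <= window_tokens


# Illustrative stand-ins for real source files.
files = {
    "auth.py": "def login(user): ...\n" * 200,
    "middleware.py": "def check(request): ...\n" * 300,
}

print(fits_in_context(files, window_tokens=100_000))  # large window
print(fits_in_context(files, window_tokens=6_000))    # small window
```

When the check fails, that is the point at which you either chunk the code manually or switch to a model with a larger window.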

Why do models struggle with debugging tasks?

Code generation benchmarks receive significant media attention, but debugging separates capable models from marketing hype. Research shows models identify faults in only 74% of debugging tasks, with performance dropping sharply when semantic-preserving mutations like variable renaming appear. A model might generate a working API endpoint but fail to spot why that same endpoint throws intermittent 500 errors under load.
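To make "semantic-preserving mutation" concrete, here is a minimal sketch that renames variables in a small function using Python's standard `ast` module and confirms the behavior is unchanged. It is an illustration, not the method used in the research cited above. The renamer ignores scoping rules, and the helper names `rename` and `run_mean` are hypothetical.

```python
import ast


class RenameVariables(ast.NodeTransformer):
    """Rename identifiers according to a mapping, leaving the logic untouched."""

    def __init__(self, mapping):
        self.mapping = mapping

    def visit_Name(self, node):
        # Variable reads and writes.
        node.id = self.mapping.get(node.id, node.id)
        return node

    def visit_arg(self, node):
        # Function parameters.
        node.arg = self.mapping.get(node.arg, node.arg)
        return node


def rename(source: str, mapping: dict) -> str:
    tree = RenameVariables(mapping).visit(ast.parse(source))
    return ast.unparse(tree)  # ast.unparse requires Python 3.9+


original = """
def mean(values):
    total = 0
    for v in values:
        total += v
    return total / len(values)
"""

mutated = rename(original, {"values": "a", "total": "b", "v": "c"})


def run_mean(source, data):
    """Execute the source and call the `mean` function it defines."""
    namespace = {}
    exec(source, namespace)
    return namespace["mean"](data)


print(mutated)
print(run_mean(original, [1, 2, 3]) == run_mean(mutated, [1, 2, 3]))
```

Both versions compute the same result, so a model that finds a bug in one but not the other is likely leaning on naming cues instead of tracing the logic.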

How do different models handle specific debugging scenarios?

Different models have distinct failure modes and blind spots. A prompt that causes Claude to fabricate package versions might work perfectly in GPT-4o, while Gemini excels at SQL optimization but struggles with Rust memory management. Teams practising "model musical chairs" switch between LLMs for the same task, using whichever LLM best handles the specific situation.
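One lightweight way to make this kind of switching repeatable is a routing table that maps task types to whichever model your team has found handles them best. The sketch below is hypothetical throughout. The class name, the task labels, and the model labels are placeholders and not real API identifiers, and the routes simply mirror the examples in the paragraph above.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ModelRouter:
    """Map a task type to a preferred model label, with a fallback."""
    default: str
    routes: Dict[str, str] = field(default_factory=dict)

    def pick(self, task_type: str) -> str:
        return self.routes.get(task_type, self.default)


# Assumed routes based on the failure modes described above; tune for your team.
router = ModelRouter(
    default="claude",
    routes={
        "sql_optimization": "gemini",    # Gemini described as strong on SQL
        "rust_memory": "claude",         # avoid Gemini for Rust memory work
        "dependency_pinning": "gpt-4o",  # avoid fabricated package versions
    },
)

for task in ("sql_optimization", "dependency_pinning", "refactor"):
    print(task, "->", router.pick(task))
```

Keeping the table in version control gives the team a shared record of which model failed where, instead of relying on individual memory.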

Why does the IDE amplify whichever model you choose?

The combination of tool and model determines outcomes more than the model alone. An LLM generating code cannot run tests, execute shell commands, or integrate with your existing CI/CD pipeline. Platforms like Orchids let you leverage your existing ChatGPT, Claude, Gemini, or GitHub Copilot subscription while adding full-stack capabilities for planning, debugging, command execution, and integrations. The IDE becomes the force multiplier, converting conversational prompts into deployed applications.

But here's what the benchmarks and marketing materials won't tell you about how these models behave under real development pressure.


How Coding LLMs Actually Perform (Mechanism and Benchmarks)

Coding LLM benchmarks

Benchmarks convert vague impressions into measurable evidence. When choosing between Claude, GPT-4, or DeepSeek, you need numbers showing real-world performance on tasks matching your workflow: debugging legacy codebases, handling multi-file edits across languages, or solving new problems.

In 2026, scores depend on both the model and the agentic scaffold used. Claude Code, Codex CLI, and custom builds produce different results from the same underlying model. Always note evaluation conditions when comparing numbers across sources.

SWE-bench Verified

This is the industry standard for testing AI code model performance. It measures model performance on real GitHub issues from active repositories. Ahead of AI reports that DeepSeek R1 achieved 79.8% on SWE-Bench Verified, while OpenAI o3 scored 71.7%. However, SWE-Bench Verified underwent a major upgrade in February 2026 (v2.0.0), making scores before and after incomparable and complicating progress tracking.

SWE-Bench Pro

Think of this as the reality check: a harder, contamination-resistant version sourced from complex real-world codebases. Even top frontier models score only around 23% here, compared to 70%+ on SWE-Bench Verified. That gap reveals how much standard scores are inflated by training data leakage. SWE-Bench Pro strips away that advantage and shows what models can do when faced with genuinely new challenges.

Terminal-Bench 2.0 and LiveCodeBench

Terminal-Bench 2.0 covers 89 tasks in real terminal environments drawn from actual developer workflows: system architecture, dependency management, environment configuration—the messy work that doesn't fit neatly into unit tests. LiveCodeBench takes a different approach by continuously pulling new competition-level coding problems so models can't train their way to a high score, providing a strong signal for algorithmic and competitive programming ability since the benchmark evolves faster than training cycles.

Aider Polyglot and HumanEval

Aider Polyglot tests how well models can edit source files across multiple programming languages using diffs—a practical measure of real-world code editing skills where you're modifying existing systems rather than writing new code from scratch. HumanEval, OpenAI's classic benchmark of 164 Python problems with unit tests, remains widely used despite advanced models now scoring 90%+, which limits its ability to differentiate between top performers. BigCodeBench offers a newer alternative with more challenging function-level tasks across a broader range of real-world libraries and applications.
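HumanEval-style results are usually reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. The sketch below implements the standard unbiased estimator, 1 - C(n-c, k) / C(n, k), for n samples of which c pass. The function name and example numbers are illustrative, and individual leaderboards may compute their figures differently.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n generated samples, c of which passed the tests."""
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    # Probability that a random size-k subset contains no passing sample.
    all_fail = comb(n - c, k) / comb(n, k)
    return 1.0 - all_fail


# Example: 10 samples for one problem, 3 of them pass.
print(round(pass_at_k(10, 3, 1), 4))  # pass@1
print(round(pass_at_k(10, 3, 5), 4))  # pass@5
```

A benchmark score is the average of this value over all problems, which is why a model can post a high pass@10 while still failing most first attempts.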

What makes WebDev Arena, ARC-AGI-2, and Chatbot Arena different?

WebDev Arena runs head-to-head human preference Elo for frontend and full-stack tasks, with humans judging output rather than automated tests, providing the best signal for UI quality and real-world web development. ARC-AGI-2 tests novel pattern recognition that models cannot memorise, making it one of the most meaningful benchmarks for raw reasoning ability. Chatbot Arena uses blind Elo voting from real humans across coding and general tasks, reflecting what people prefer over synthetic metrics.
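For readers unfamiliar with Elo, the sketch below shows the classic update rule applied to a single head-to-head vote. It is a textbook illustration only. The arenas' actual rating procedures are not described here and may differ, and the K-factor of 32 and the starting ratings are assumptions.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update_elo(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return both ratings after one vote; k controls how fast ratings move."""
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    new_a = rating_a + k * (score_a - exp_a)
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - exp_a))
    return new_a, new_b


# Two models start level; a human voter prefers model A's output.
print(update_elo(1500.0, 1500.0, a_won=True))  # (1516.0, 1484.0)
```

An upset against a higher-rated model moves the ratings more than an expected win, so rankings converge as votes accumulate.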

How do benchmark scores translate to real development work?

But here's the tension: you can study every benchmark and still struggle to turn those numbers into productive development time. The best LLM for coding isn't about raw performance on isolated tasks—it's about how that model integrates into your actual workflow.

How do architectural differences affect coding performance?

How well coding LLMs work depends on their architecture, training data, and reasoning approach. Decoder-only architectures generate code sequentially, making them fast and efficient. Encoder-decoder models process all code context bidirectionally before generating output, enabling better understanding of complex cross-file dependencies but at the cost of slower inference. Benchmarks like HumanEval, MBPP, and APPS measure code correctness under controlled conditions, though real-world performance often differs from these results.

Why do benchmark leaders still fail at production tasks?

According to The State Of LLMs 2025, DeepSeek R1 achieved 79.8% on SWE-Bench Verified, while OpenAI o3 scored 71.7%. Yet both models struggle with asynchronous state management and multi-threaded race conditions. The gap between benchmark performance and production reliability arises when refactoring authentication logic across microservices or debugging caching-layer corruption of session data.

Benchmarks measure what models can do in isolation. Real development requires understanding how systems work together under load, across time zones, and with undocumented legacy constraints.

Why do benchmark scores drop so dramatically on contamination-resistant tests?

Top frontier models score 70%+ on SWE-Bench Verified but drop to roughly 23% on SWE-Bench Pro, the contamination-resistant variant sourced from complex real-world codebases. This 47-point gap reveals how standard benchmarks inflate performance through training data leakage: models memorize solutions to popular GitHub issues and repeat them when similar patterns appear in evaluations.
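The arithmetic behind that gap is simple enough to spell out. The sketch below uses the rounded figures quoted above (70% and 23%), so the outputs are approximate, and the function name is hypothetical.

```python
def contamination_gap(standard_score: float, resistant_score: float):
    """Return (gap in percentage points, fraction of the standard score retained)."""
    gap = standard_score - resistant_score
    retained = resistant_score / standard_score
    return gap, retained


gap, retained = contamination_gap(70.0, 23.0)
print(f"{gap:.0f} points; {retained:.0%} retained")  # 47 points; 33% retained
```

Put another way, a model at these figures keeps only about a third of its headline score once familiar problems are removed.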

How do scaffold differences make benchmark comparisons meaningless?

The SWE-Bench Verified scaffold received a major upgrade in February 2026 (v2.0.0), making scores from before and after incomparable. One vendor's "79% on SWE-Bench" might use Claude Code's agentic scaffold with full repository access and multiple debugging attempts, while another's "71%" might reflect raw model output with no tool assistance. The score alone reveals little without knowing the scaffold, context window size, retry logic, and whether the model had access to test outputs during generation.
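One way to avoid comparing incompatible numbers is to record the evaluation conditions alongside every score and refuse to compare when they differ. The sketch below is a hypothetical data model. The class names, field names, and example values are all illustrative and do not describe any vendor's actual setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalConditions:
    benchmark: str
    benchmark_version: str
    scaffold: str            # e.g. an agentic harness, or "none" for raw output
    max_attempts: int        # retry budget per task
    sees_test_output: bool   # whether test results were fed back to the model


@dataclass(frozen=True)
class ReportedScore:
    model: str
    score: float
    conditions: EvalConditions


def comparable(a: ReportedScore, b: ReportedScore) -> bool:
    """Two scores are comparable only if every evaluation condition matches."""
    return a.conditions == b.conditions


agentic = EvalConditions("SWE-Bench Verified", "v2.0.0", "agentic", 3, True)
raw = EvalConditions("SWE-Bench Verified", "v2.0.0", "none", 1, False)

vendor_a = ReportedScore("model-a", 79.0, agentic)  # hypothetical values
vendor_b = ReportedScore("model-b", 71.0, raw)
vendor_c = ReportedScore("model-c", 75.0, agentic)

print(comparable(vendor_a, vendor_b))  # different scaffold and retries
print(comparable(vendor_a, vendor_c))  # same conditions
```

A strict equality check is deliberately conservative: it is better to flag two scores as incomparable than to rank models on numbers produced under different rules.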

Which specific tasks do models consistently fail?

Research analyzing code generation benchmarks found 114 tasks where large language models consistently struggle. Failures cluster around tracking state changes, pointer arithmetic in memory-unsafe languages, and backtracking algorithms with multiple exit paths.

A model might write a binary search tree implementation that compiles and passes half the test cases, then corrupts the tree's structure during edge-case deletions because it lost track of parent node references across recursive calls.

How do production environments expose model weaknesses?

Terminal-Bench 2.0 exposes another problem. Its 89 tasks come from real developer workflows covering system architecture, dependency management, and environment configuration. Models scoring 90%+ on HumanEval often fail to set up Docker networking for multi-container applications, or to diagnose why a Python package installs cleanly but fails on import because of conflicting transitive dependencies.

The gap between "writes code that follows the rules" and "understands how software runs in production" remains wide enough to make fully independent development impractical beyond isolated functions.

How do specialized benchmarks reveal true model capabilities?

LiveCodeBench continuously pulls new competition-level coding problems to prevent models from training on benchmarks, making it the strongest signal for algorithmic and competitive programming ability. Aider Polyglot tests models' ability to edit source files across multiple programming languages using diffs, providing a practical signal for real-world code editing workflows.

WebDev Arena ranks models through head-to-head human preference voting on frontend and full-stack tasks, with Gemini 3.1 Pro currently leading for UI quality. Chatbot Arena's blind Elo voting across coding and general tasks remains the most reliable measure of real-world output quality because humans evaluate the final result rather than intermediate benchmark performance.

Why does the model and tooling combination matter more than individual performance?

The combination of model and tooling determines outcomes more than either factor alone. Platforms like Orchids let you leverage whichever LLM best handles your specific context—ChatGPT for conversational iteration, Claude for large codebases, Gemini for SQL optimization—while adding the full-stack scaffolding for planning, debugging, command execution, and integrations that transform conversational prompts into deployed applications.

The IDE handles architecture decisions, dependency resolution, and workflow orchestration that the LLM cannot manage independently, making "which model is best" less important than "which model plus tooling combination solves this specific problem fastest." Knowing which models exist and what they excel at requires looking past marketing claims to measurable, task-specific performance.


12 Best LLMs for Coding (Tools That Make Your Life Easier)

The best coding LLM depends on what you're building, how you work, and which constraints matter most. A model that excels at generating React components might struggle with debugging legacy Java threading issues. One that handles entire repositories brilliantly might cost 10x more than necessary for routine refactoring. The right choice emerges when you match specific capabilities—context handling, reasoning depth, language support, cost structure—to your actual work.

1. Orchids

Orchids lets you build and ship real applications fast through an AI-powered IDE that works across any language and framework. It's a full-stack development environment where you bring your own LLM subscriptions (ChatGPT, Claude, Gemini, GitHub Copilot) rather than being locked into a single provider.

Key capabilities

- Multi-LLM flexibility: Use your existing AI subscriptions instead of paying for another proprietary model, accessing whichever frontier LLM performs best for your task.

- Full-stack scope: Build web apps, mobile apps, scripts, bots, and extensions, not just frontend mockups or static sites.

- Complete deployment pipeline: One-click deployment, custom domains, and automatic migration to your own Vercel account when ready to scale.

- Database and auth integration: Connect your preferred database and authentication stacks, and integrate payments without wrestling with configuration.

- Import existing code: Bring in current projects, run security audits, and continue building rather than starting from scratch.

- Background agents: Jira, Linear, and Slack integrations that keep project management synchronised without manual updates.

- #1 on App Bench and UI Bench: Ranked first for application generation quality and user interface accuracy.

Best when

You need a complete coding agent that handles planning, debugging, command execution, and deployment across any stack, rather than a chat interface that generates code snippets requiring manual integration.

Start building your first app free at Orchids.

2. OpenAI GPT LLMs for coding

GPT-5.4 (released March 5, 2026) represents OpenAI's first general-purpose model with native computer use capabilities. It builds on GPT-5.3-Codex's coding strength and extends it into a model designed for sustained professional workflows across software environments.

Key capabilities

- Native computer use: First OpenAI model that autonomously navigates applications, operates computers, and executes multi-step workflows via Codex and the API

- 1M token context: Available in API and Codex, enabling agents to plan, execute, and verify tasks across long coding sessions without losing context

- Token efficiency: Uses up to 47% fewer tokens on tool-heavy tasks compared to GPT-5.2, resulting in faster speeds and lower API costs

- Upfront planning: In ChatGPT, GPT-5.4 Thinking displays reasoning plans before completing responses, letting you course-correct mid-response without restarting

- Reduced hallucinations: According to Vellum's LLM comparison, individual claims are 33% less likely to be false compared to GPT-5.2, with full responses 18% less likely to contain errors

- Knowledge work benchmarks: 83% on OpenAI's GDPval benchmark, with record scores on OSWorld-Verified and WebArena Verified

- Professional domain strength: 91% on BigLaw Bench; preferred by 87.3% of investment banking modelers over GPT-5.2

- GPT-5.4 Pro: Higher-performance variant for ChatGPT Pro and Enterprise users, achieving 89.3% on BrowseComp and 83.3% on ARC-AGI-2

- Deployment: Available across ChatGPT (as GPT-5.4 Thinking for Plus, Team, and Pro users), the API (as gpt-5.4), and Codex.

Best when

Frontend development, document-heavy professional workflows, and agentic tasks requiring computer interaction across multiple applications.

3. Claude Sonnet 4.6

Claude Sonnet 4.6 (released February 17, 2026) is now the default model on claude.ai for Free and Pro users. It delivers Opus-class performance on most tasks at a fraction of the cost. Developers with early access preferred it to Sonnet 4.5 by a wide margin and preferred it over Opus 4.5 (Anthropic's previous frontier model) 59% of the time in Claude Code testing.

Key capabilities

- SWE-bench Verified: 79.6% in standard mode, up from Sonnet 4.5's 77.2%

- Computer use leadership: 72.5% on OSWorld-Verified, with major improvements in prompt injection resistance versus prior Sonnet models

- Adaptive thinking: New default reasoning mode that dynamically decides when and how much to think, eliminating manual extended thinking configuration for most tasks

- 1M token context (beta): Holds entire codebases, lengthy contracts, or dozens of research papers in a single request

- 64K output tokens: Supports generation of complete applications, comprehensive refactors, and large documentation sets

- Better long-session coding: Users report fewer false claims of success, fewer hallucinations, and less over-engineering or logic duplication versus Sonnet 4.5

- OfficeQA: Matches Opus 4.6 on enterprise document comprehension (charts, PDFs, tables), extending capability to non-engineering knowledge work

- API pricing: $3 per million input tokens, $15 per million output tokens (same as Sonnet 4.5)

In Claude Code testing, users rated Sonnet 4.6 as significantly better at reading context before modifying code, consolidating shared logic rather than duplicating it, and following multi-step instructions through to completion.

Best when

Production coding workflows that require sustained context awareness, multi-file refactoring, and reliable adherence to instructions across long sessions.

4. Google Gemini 2.5 Pro

Google Gemini 2.5 Pro is designed for large-scale coding projects, featuring a 1,000,000-token context window that enables it to handle entire repositories, test suites, and migration scripts in a single pass. It excels at generating, debugging, and refactoring code across multiple files and frameworks.

Key capabilities

- Code generation: Creates new functions, modules, or entire applications from prompts or specifications

- Code editing: Applies targeted fixes, improvements, or refactoring directly within existing codebases.

- Multi-step reasoning: Breaks down complex programming tasks into logical steps and executes them reliably.

- Frontend/UI development: Builds interactive web components, layouts, and styles from natural language or designs.

- Large codebase handling: Understands and navigates entire repositories with multi-file dependencies.

- MCP integration: Supports Model Context Protocol for smooth use of open-source coding tools

- Controllable reasoning: Adjusts depth of problem solving ("thinking mode") to balance accuracy, speed, and cost.

Pros

- Excels at generating full solutions from scratch

- Handles large codebases with 1M-token context

- Strong benchmark performance in coding tasks

- Deep Think boosts reasoning for complex problems

Cons

- Weaker at debugging and code fixes

- Sometimes hallucinates or changes code unasked

- Verbose outputs and format inconsistencies

Best when

Generating complete applications from specifications, navigating large multi-file repositories, or building frontend interfaces from design mockups.

5. DeepSeek V3.1/R1

"Open-source reasoning powerhouse with MIT licensing."

Released in early 2025, DeepSeek's V3.1 and R1 models offer complete freedom to modify, inspect, and deploy without restriction. The R1 variant uses reinforcement learning to generate internal reasoning chains and self-check answers on multi-step problems that require precision.

Key capabilities

- Mixture-of-experts architecture activates only necessary model components per query, keeping inference costs lower while maintaining 128k context windows.

- Self-verification generates step-by-step reasoning chains and checks answers against them, which is particularly effective for mathematical proofs and algorithmic problem-solving.

- Quantization support enables deployment on consumer hardware without significant performance degradation.

- Multilingual training across English and Chinese expands applicability beyond English-centric codebases.
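The "activates only necessary model components" idea can be illustrated with generic top-k expert routing. The sketch below is a simplified textbook version in plain Python, not DeepSeek's actual router. The gate logits, the number of experts, and the function names are all assumptions made for illustration.

```python
import math
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Select the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


# Four hypothetical experts; only two run for this token.
selected = route_top_k([0.1, 2.0, -1.0, 1.5], k=2)
print([index for index, _ in selected])  # [1, 3]
```

Because only the selected experts execute, compute per token scales with k and not with the total number of experts, which is where the inference savings come from.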

Real-world deployment

Use "Think" mode for complex architectural decisions that require seeing the reasoning behind them; switch to standard mode for routine code generation. This works best for teams that need to audit model decisions or fine-tune for domain-specific coding patterns.

6. Meta Llama 4 Scout

"10-million-token context for repository-scale analysis"

Meta released Scout (17B active parameters) in April 2025 with a context window that fundamentally changes what "understanding your codebase" means. Its 10-million-token capacity handles entire repositories, dependency graphs, and multi-file refactoring without losing context across files.

Key capabilities

- Repository-level comprehension tracks dependencies, function calls, and data flow across dozens of files simultaneously.

- Multimodal input processes code alongside documentation, diagrams, and natural language specifications.

- Function calling generates structured JSON outputs for API integration and tool orchestration.

- Local deployment runs efficiently on developer workstations, eliminating API latency and privacy concerns.

Performance context

According to Vellum's LLM coding benchmarks, Gemini 3 Pro achieves 79.7% on LiveCodeBench. Scout's improvements over Llama 3 on MBPP place it in similar territory for practical coding tasks.

When to deploy

Teams working on legacy codebases find Scout's extended context invaluable. Instead of manually explaining how five different modules interact, load the entire subsystem and ask architectural questions. The model identifies patterns across files that would take hours to trace manually.

Available as an open-weight download for local deployment or through cloud providers, under Meta's Llama 4 Community License, which permits commercial use and modification subject to its terms.

7. Mistral Codestral 25.01

95.3% first-attempt accuracy on code completion

Released January 2025, Codestral 25.01 optimizes for the interruption-driven reality of coding: you're mid-thought, cursor blinking, and you need the next three lines now. The model achieves 95.3% FIM (fill-in-the-middle) pass@1 success across Python, Java, JavaScript, and 77 other languages.

Key capabilities

- Improved tokenizer and architecture deliver 2x faster generation compared to Codestral 2405

- Low-latency design handles high-frequency completion requests without degrading response time.

- Cross-language proficiency maintains consistent quality across Rust, TypeScript, and Go.

- Context-aware completion understands surrounding code structure, not just immediate lines.

Deployment pattern

The speed advantage matters most in tight feedback loops. When rapidly prototyping or refactoring under time pressure, the difference between 200ms and 400ms response time compounds across hundreds of completions per hour.

Available through Mistral's API with tiered pricing based on request volume, it integrates with major IDEs through standard completion protocols.

8. Kimi K2.5

Visual coding at $0.60/M tokens

Moonshot AI's K2.5 reached 50 billion tokens per day on OpenRouter within hours of release in early 2026. Developers adopted it for production workflows because it converts UI designs and screen recordings directly into working front-end code.

Key capabilities

- Visual-to-code translation processes Figma mockups, screenshots, and screen recordings into React, Vue, or vanilla JavaScript.

- Agent Swarm mode spawns parallel sub-agents for complex tasks, each handling discrete subtasks simultaneously.

- Vellum's coding benchmarks show Claude Sonnet 4.5 achieving 82% on SWE Bench, while K2.5 reaches 76.8% at one-tenth the cost ($0.60/M vs $6.00/M for Claude Opus 4.6).

- Kimi Code CLI competes directly with Claude Code, integrating with VSCode, Cursor, and Zed through open-source tooling.

Cost-performance reality

When prototyping multiple UI approaches or maintaining design systems with hundreds of components, the 10x cost difference determines what's economically feasible. Teams use K2.5 for initial implementation, then apply premium models for complex edge cases.
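To see how the price difference plays out at volume, the sketch below compares monthly spend at the two per-million-token prices quoted above ($0.60 and $6.00). The daily token volume is an assumed figure for illustration, and the calculation ignores any split between input and output pricing.

```python
def monthly_cost(tokens_per_day: int, price_per_million: float, days: int = 30) -> float:
    """Estimated spend in dollars for a steady daily token volume."""
    return tokens_per_day / 1_000_000 * price_per_million * days


TOKENS_PER_DAY = 50_000_000  # assumption: a team processing 50M tokens daily

budget = monthly_cost(TOKENS_PER_DAY, 0.60)
premium = monthly_cost(TOKENS_PER_DAY, 6.00)

print(f"budget model:  ${budget:,.0f}/month")
print(f"premium model: ${premium:,.0f}/month")
print(f"ratio: {premium / budget:.0f}x")
```

Running the numbers for your own volume makes the trade-off explicit: the cheaper model for bulk first passes, the premium model reserved for the cases that justify it.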

Available through OpenRouter, direct API, and CLI integration. The model features a 1-trillion-parameter mixture-of-experts architecture with 32B active parameters per request.

9. Qwen 3.5

Beats GPT-5.2 on instruction following at $0.40/M

Alibaba's Qwen 3.5, released February 16, 2026, outperforms premium models on instruction following: Qwen 3.5 at 76.5, GPT-5.2 at 75.4, Claude at 58.0.

Key capabilities

- Apache 2.0 licensing enables self-hosting, modification, and unrestricted commercial deployment.

- 397B total parameters with 17B active (MoE architecture) balances capability with inference efficiency

- LiveCodeBench v6 score of 83.6 and AIME 2026 score of 91.3 demonstrate strong coding and mathematical reasoning.

- Smallest variants run on consumer hardware, enabling local deployment for individual developers.

Deployment economics

At $0.40/M input tokens, Qwen 3.5 costs 10-17x less than Claude or GPT for comparable tasks. For teams processing millions of tokens daily, this cost difference can fund additional infrastructure. Available through OpenRouter, Hugging Face, and self-hosted deployment, it supports 201 languages and delivers strong performance across major programming languages.

10. GLM-4.7

Thinking-before-tool-use for agentic reliability

Z.ai's GLM-4.7 solves a core problem with agentic coding: models that jump to tool use before understanding the task. GLM-4.7's thinking-before-tool-use behaviour adds a reasoning step, improving the reliability of multi-file refactoring.

Key capabilities

- The pre-tool reasoning phase analyzes task requirements before selecting and executing tools.

- Strong performance in real-world coding environments where context matters more than raw speed.

- Cost-effective deployment through Z.ai, OpenRouter, and major agent frameworks

- Available in Google Cloud Vertex AI Model Garden for enterprise integration

When it matters

Developers working on constrained hardware prefer GLM-4.7 for complex refactoring tasks. The thinking step prevents cascading errors when a model executes the wrong approach across multiple files. It's accessible through cloud APIs and local deployment.

11. Devin: Delegation Mode

Devin feels like handing work off rather than pair-programming. You describe a task, check in periodically, and let it explore, implement, test, and iterate without constant supervision. Vellum's benchmark data shows the Kimi K2 Thinking model hitting 83.1% on LiveCodeBench, demonstrating that autonomous coding agents can handle complex, multi-step tasks with minimal human intervention.

Great for long-horizon work where tight steering creates more friction than value. If you're refactoring a legacy module or building a feature that requires multiple iterations, Devin's persistence outweighs the need for tighter control. Without periodic reviews, though, you risk large diffs and cleanup debt that erase the time saved. Best when the task is substantial enough that constant back-and-forth would slow you down more than occasional course correction.

12. Builder: Frontend Shipping Mode

Builder treats UI as the product, not the code that generates it. "Done" is when the rendered UI matches intent, not when code compiles. Visual verification catches "almost right" changes early, before they compound into rework. Design-system grounding keeps spacing, tokens, and component intent aligned, reducing drift that turns polished mockups into inconsistent production interfaces.

Review improves because verification anchors to what renders, not what someone claimed changed. According to Vellum's performance analysis, Claude Sonnet 4.5 achieves 82% on SWE-Bench. Builder pairs that capability with automatic PR shipping and background agents for Jira, Linear, and Slack, converting UI fixes into deployable updates without manual handoffs.

Best when frontend engineering, design-system work, or UI regressions are the bottleneck. If the real risk is visual drift rather than logic errors, Builder's rendering-first approach prevents problems that code review alone misses.

Our AI app generator lets you use your existing subscriptions (ChatGPT, Claude, Gemini, GitHub Copilot) or any API key, so the environment adapts to the problem instead of forcing the problem to fit the tool. Planning, debugging, running commands, and integrations remain consistent across models.

The tools that make your life easier disappear into your workflow, handling repetitive tasks while you focus on decisions only you can make. But knowing which tool to reach for matters only if you understand when automation helps and when it hinders.

How to Measure LLM Performance for Your Projects

Pick a coding task you already know the answer to. Run it through two or three different models and compare the results. This is the fastest way to understand which LLM handles your specific context best, because performance isn't about abstract benchmarks: it's about whether the model solves your problem correctly, quickly, and without creating new problems.

Key Point: The most reliable way to evaluate LLM performance is through real-world testing with your actual use cases, not theoretical benchmarks.

Warning: Don't rely solely on published performance metrics. What works for general tasks may not work for your specific coding requirements and project constraints.

What criteria should you prioritize when testing code generation?

Code correctness matters most: Does the generated function compile? Does it pass your existing test suite? Does it handle edge cases without crashing? Runtime efficiency comes next: a solution that takes 10 seconds when your current implementation runs in 200 milliseconds is a regression, even if its output is correct.

Debugging and refactoring capability separates models that write code from models that understand code. Can it trace why your authentication middleware throws intermittent 500 errors under load, or does it merely suggest rewriting the entire auth layer?
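A minimal sketch of the correctness and runtime checks described above, assuming the generated code is a plain Python function. The helper `check_candidate`, the sample function `normalize_email`, and the test cases are all hypothetical. The 0.2-second budget mirrors the 200-millisecond figure mentioned earlier.

```python
import time
from typing import Callable, Iterable


def check_candidate(
    func: Callable[[str], str],
    cases: Iterable[tuple[str, str]],
    budget_seconds: float,
) -> dict:
    """Run `func` on (input, expected) pairs; report correctness and time."""
    failures = []
    start = time.perf_counter()
    for given, expected in cases:
        try:
            actual = func(given)
        except Exception as exc:  # a crash on an edge case counts as a failure
            failures.append((given, repr(exc)))
            continue
        if actual != expected:
            failures.append((given, actual))
    elapsed = time.perf_counter() - start
    return {
        "correct": not failures,
        "within_budget": elapsed <= budget_seconds,
        "elapsed_seconds": elapsed,
        "failures": failures,
    }


# Hypothetical model output under test: normalize an email address.
def normalize_email(raw: str) -> str:
    return raw.strip().lower()


CASES = [
    ("  Ada@Example.COM ", "ada@example.com"),
    ("bob@example.com", "bob@example.com"),
    ("", ""),  # edge case: empty input must not crash
]

report = check_candidate(normalize_email, CASES, budget_seconds=0.2)
print(report["correct"], report["within_budget"])
```

In practice you would point this at your existing test suite rather than a hand-written case list. The sketch only shows that correctness and speed are checked together, for the same run.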

How do you effectively evaluate multilingual capabilities?

Multi-language versatility reveals whether you're choosing a specialist or generalist. A model that excels with Python data pipelines might struggle with Rust memory management or SQL query optimization.

According to Ahead of AI, task-specific testing against your actual codebase reveals capability gaps that benchmarks miss. If you're building microservices in Go, test on Go. If you're refactoring a legacy Java monolith, test on that monolith's structure.

How do you create an effective testing framework?

Take a real function from your current project—the API endpoint parsing webhook payloads, the database query combining user activity, or the React component handling form validation—and generate the same solution using two or three different LLMs.

What metrics should you measure for comparison?

Measure how long each model takes to produce a working solution, whether the result is correct (by running your existing test suite), and how readable it is (would you accept it in a pull request without major changes?). The model with the highest score across all three areas wins for that task.
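One way to turn those three measurements into a single comparable score is sketched below. The model names, the numbers, the 1-to-5 readability scale, and the equal weighting are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    model: str
    seconds: float     # wall-clock time to produce the solution
    tests_passed: int
    tests_total: int
    readability: int   # reviewer rating, 1 (reject) to 5 (merge as-is)


def score(trial: Trial, slowest: float) -> float:
    """Average three 0-1 components; equal weighting is an assumption."""
    speed = 1.0 - trial.seconds / slowest if slowest > 0 else 0.0
    correctness = trial.tests_passed / trial.tests_total
    readability = (trial.readability - 1) / 4
    return (speed + correctness + readability) / 3


trials = [
    Trial("model-a", seconds=40.0, tests_passed=18, tests_total=20, readability=4),
    Trial("model-b", seconds=20.0, tests_passed=20, tests_total=20, readability=3),
    Trial("model-c", seconds=10.0, tests_passed=14, tests_total=20, readability=2),
]
slowest = max(t.seconds for t in trials)
ranked = sorted(trials, key=lambda t: score(t, slowest), reverse=True)
for t in ranked:
    print(f"{t.model}: {score(t, slowest):.2f}")
```

Because correctness matters most, you may prefer to weight it more heavily or to disqualify any model that fails a test. Equal weights are used here only to keep the sketch short.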

Why should you test different tasks separately?

Try a different task next: the model that excels with React components might struggle with optimizing Postgres queries. Many developers switch among different LLMs while working on a project, using whichever performs best for their current task rather than sticking with a single model.
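If you record a score per task, choosing a model per task becomes a small lookup. The sketch below assumes you already have one score per task and model. The task names, model names, and scores are made up.

```python
from collections import defaultdict

# (task, model, score) rows; all names and numbers are made up for illustration.
RESULTS = [
    ("react-form-component", "model-a", 0.81),
    ("react-form-component", "model-b", 0.64),
    ("postgres-query-tuning", "model-a", 0.52),
    ("postgres-query-tuning", "model-b", 0.77),
]


def best_model_per_task(rows):
    """Return {task: model}, choosing the highest-scoring model for each task."""
    by_task = defaultdict(list)
    for task, model, score in rows:
        by_task[task].append((score, model))
    # max() compares scores first; ties fall back to the model name.
    return {task: max(entries)[1] for task, entries in by_task.items()}


routing = best_model_per_task(RESULTS)
print(routing)
```

With this sample data, the React task routes to one model and the Postgres task to the other, matching the habit of switching models by task.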

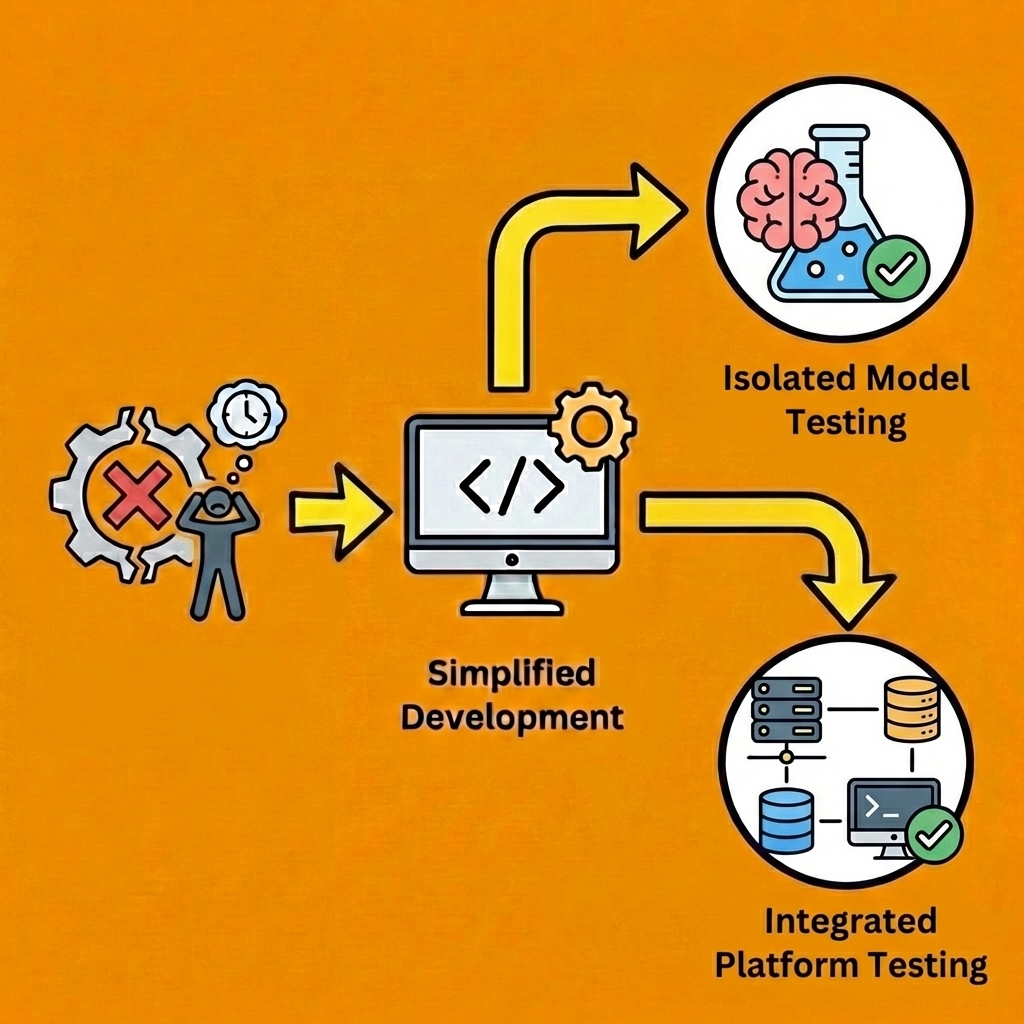

Why isolated model testing misses the point

Most developers test LLMs in isolation, pasting prompts into a chat interface and evaluating raw output. But you don't ship code from a chat window. You ship code that integrates with existing infrastructure, passes CI/CD pipelines, connects to databases, handles authentication, and deploys to production environments.

The combination of model and tooling determines outcomes more than the model alone. Platforms like Orchids let you test whichever LLM subscription you already have (ChatGPT, Claude, Gemini, GitHub Copilot) while adding full-stack execution around planning, debugging, command execution, and deployment. The IDE handles architecture decisions, dependency resolution, and workflow orchestration that the LLM cannot manage independently, making "which model scored highest on my test" less important than "which model plus tooling combination shipped working code fastest."

What metrics actually matter for model selection?

Research from LinkedIn identifies seven categories of metrics for measuring LLM performance, though most matter only if you're training or fine-tuning models. For developers choosing between existing LLMs, three questions drive every decision: does it work, how fast does it work, and how much fixing does it require afterward?

But knowing how to test models matters only if you understand what happens when the model fails and how to recover without starting over.

Stop Wasting Hours Debugging — Let AI Handle the Heavy Lifting

Picking the right LLM for coding matters less than what you do with it. The fastest way to find which model works for your project is to build something real now and measure whether you shipped faster than you would have manually.

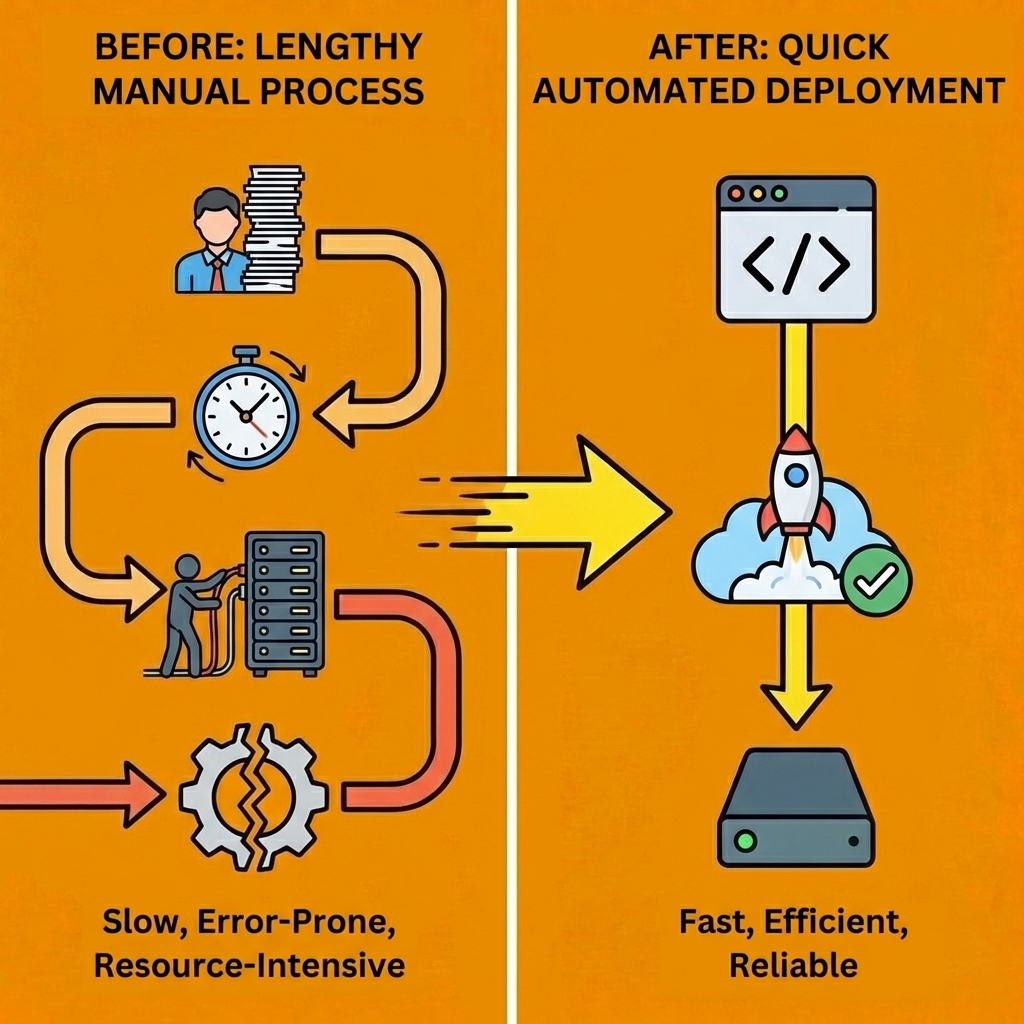

Tip: Most developers test models in isolation, then wonder why production integration takes three times longer than expected. The model writes clean code, but you still need to set up environments, connect databases, wire up authentication, run security audits, and deploy without breaking existing infrastructure. Testing a model's raw output tells you almost nothing about whether it will actually speed up your workflow.

Platforms like Orchids eliminate that gap by letting you bring your existing LLM subscription (ChatGPT, Claude, Gemini, or GitHub Copilot) while handling the full execution layer. You control which model runs your prompts and what you pay for API calls. Orchids handles planning, debugging, terminal commands, database connections, authentication setup, payment integrations, and one-click deployment. Your chosen model, plus the IDE's scaffolding, ships working applications faster than either tool alone.

| Manual Testing | Integrated Platform |
|---|---|
| Model output only | Full execution layer |
| 3x longer integration | One-click deployment |
| Manual environment setup | Automated scaffolding |

Key Point: Start with a task you already solved manually last week. Generate the same solution using your preferred LLM inside a tool that can actually execute, test, and deploy it. Measure time saved, bugs avoided, and whether you'd merge the output without major revisions.

Warning: The best LLM for coding is whichever one you're actually using to ship code today. Build your first app for free and find out which model works best by watching what ships, not what scores highest on benchmarks.

Bilal Dhouib

Head of Growth @ Orchids